Apr 15, 2026

Trust Issues With Coding Agents? Use a Plan-First, Patch-Second Workflow

A low-trust, high-speed workflow for AI coding agents: require a plan, isolate execution, checkpoint changes, review diffs, and capture the decision log before merging.

Distrusting coding agents is rational. They can edit quickly, miss local conventions, and create plausible-looking regressions. The answer is neither blind trust nor total avoidance; it is an AI coding workflow that lets coding agents move fast inside clear boundaries.

A plan-first, patch-second workflow gives you that boundary. The agent must explain the goal, scope, files, risks, and tests before it edits. Then you review the patch against the plan instead of trying to infer intent from a pile of diffs.

The Core Rule

Every agent run should have two phases:

- Plan first: explain the intended change, files likely to change, risk, and verification.

- Patch second: edit only inside that scope, then report what changed and how it was checked.

This creates a reviewable contract. If the patch touches files the plan did not mention, you have a concrete reason to pause. If the plan misses an obvious risk, you catch it before code changes.
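The contract is concrete enough to check mechanically. Below is a minimal Python sketch. It assumes two things:

- The approved plan lists its scope as glob patterns.
- You collect changed paths with something like `git diff --name-only`.

`out_of_scope` and the example paths are hypothetical, not part of any tool.

```python
from fnmatch import fnmatchcase


def out_of_scope(changed_files: list[str], planned_scope: list[str]) -> list[str]:
    """Return changed paths that match none of the plan's scope patterns."""
    return [
        path
        for path in changed_files
        # fnmatchcase: case-sensitive on every OS; "*" also matches "/".
        if not any(fnmatchcase(path, pattern) for pattern in planned_scope)
    ]


# Hypothetical plan scope and changed files from one agent run.
plan_scope = ["src/billing/*.py", "tests/billing/*"]
changed = [
    "src/billing/invoice.py",
    "tests/billing/test_invoice.py",
    "src/auth/session.py",
]
print(out_of_scope(changed, plan_scope))  # ['src/auth/session.py']
```

A non-empty result is the concrete reason to pause: the run touched a file the plan never mentioned.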

The Workflow

1. Require a Short Plan Before Edits

Make the first response boring and specific. You want a scoped plan, not a motivational essay. Ask for touched files, assumptions, test strategy, and a stop condition.

`Before editing, reply with: - Goal in one sentence - Files or modules you expect to touch - Files you will not touch - Main risk - Verification plan - Question if scope is unclear After I approve, make the smallest patch that satisfies the goal.`
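If you want a quick gate before approving, you can check the reply for empty sections. This sketch assumes you ask the agent to answer with one `Label: value` line per item. The short labels (`Goal`, `Touch`, `Won't touch`, `Risk`, `Verify`) and the helper names are hypothetical stand-ins for the prompt's wording. The optional scope question is deliberately not required.

```python
import re

# Hypothetical short labels; assumes the agent answers the planning
# prompt as "Label: value" lines using exactly these labels.
REQUIRED_FIELDS = ("Goal", "Touch", "Won't touch", "Risk", "Verify")

# Matches lines like "- Goal: fix rounding" (leading dash optional).
# A label with nothing after the colon does not match, so it counts as missing.
FIELD_LINE = re.compile(r"^\s*-?\s*([^:]+):\s*(\S.*)$")


def parse_plan(text: str) -> dict[str, str]:
    """Collect non-empty "Label: value" pairs from the agent's plan reply."""
    fields: dict[str, str] = {}
    for line in text.splitlines():
        match = FIELD_LINE.match(line)
        if match:
            fields[match.group(1).strip()] = match.group(2).strip()
    return fields


def missing_fields(text: str) -> list[str]:
    """Return required labels that are absent or left empty."""
    fields = parse_plan(text)
    return [name for name in REQUIRED_FIELDS if not fields.get(name)]


reply = """- Goal: Fix rounding in invoice totals
- Touch: src/billing/invoice.py, tests/billing/test_invoice.py
- Won't touch: src/auth/
- Risk: Totals on existing invoices may shift by one cent
- Verify:"""

print(missing_fields(reply))  # ['Verify']
```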

2. Keep One Execution Lane Per Task

Do not let multiple agents modify the same files unless you deliberately split ownership. One agent can implement, another can review, but two implementers touching the same module usually create merge noise and unclear accountability.

If you do run multiple agents, use Channel Chat or Channel Templates to make roles explicit: planner, implementer, reviewer, tester. Each agent needs a lane.
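Lanes are easiest to enforce when each editing agent declares the files it may write. The sketch below flags any file claimed by two lanes. The agent names and paths are hypothetical. Read-only roles such as a reviewer are left out because they do not write.

```python
from collections import defaultdict


def overlapping_files(lanes: dict[str, set[str]]) -> dict[str, list[str]]:
    """Map each file claimed by more than one agent to those agents' names."""
    owners: dict[str, list[str]] = defaultdict(list)
    for agent, files in lanes.items():
        for path in files:
            owners[path].append(agent)
    # Keep only shared files; sort agent names for stable output.
    return {path: sorted(agents) for path, agents in owners.items() if len(agents) > 1}


# Hypothetical write lanes; the reviewer is omitted because it only reads.
lanes = {
    "implementer": {"src/billing/invoice.py", "src/billing/tax.py"},
    "tester": {"tests/billing/test_invoice.py"},
    "refactor-bot": {"src/billing/tax.py"},
}
print(overlapping_files(lanes))  # {'src/billing/tax.py': ['implementer', 'refactor-bot']}
```

An empty result means no two lanes share a file. Anything else is a split you should either make deliberate or remove.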

3. Checkpoint Before Risky Edits

Before a large refactor, create a checkpoint commit or worktree. This lowers the emotional cost of experimentation: you can let the agent try an approach because rollback is cheap.

1DevTool's Git Worktrees support helps isolate parallel attempts. A hotfix, refactor, or agent experiment can run in its own working copy without disturbing your current branch state.

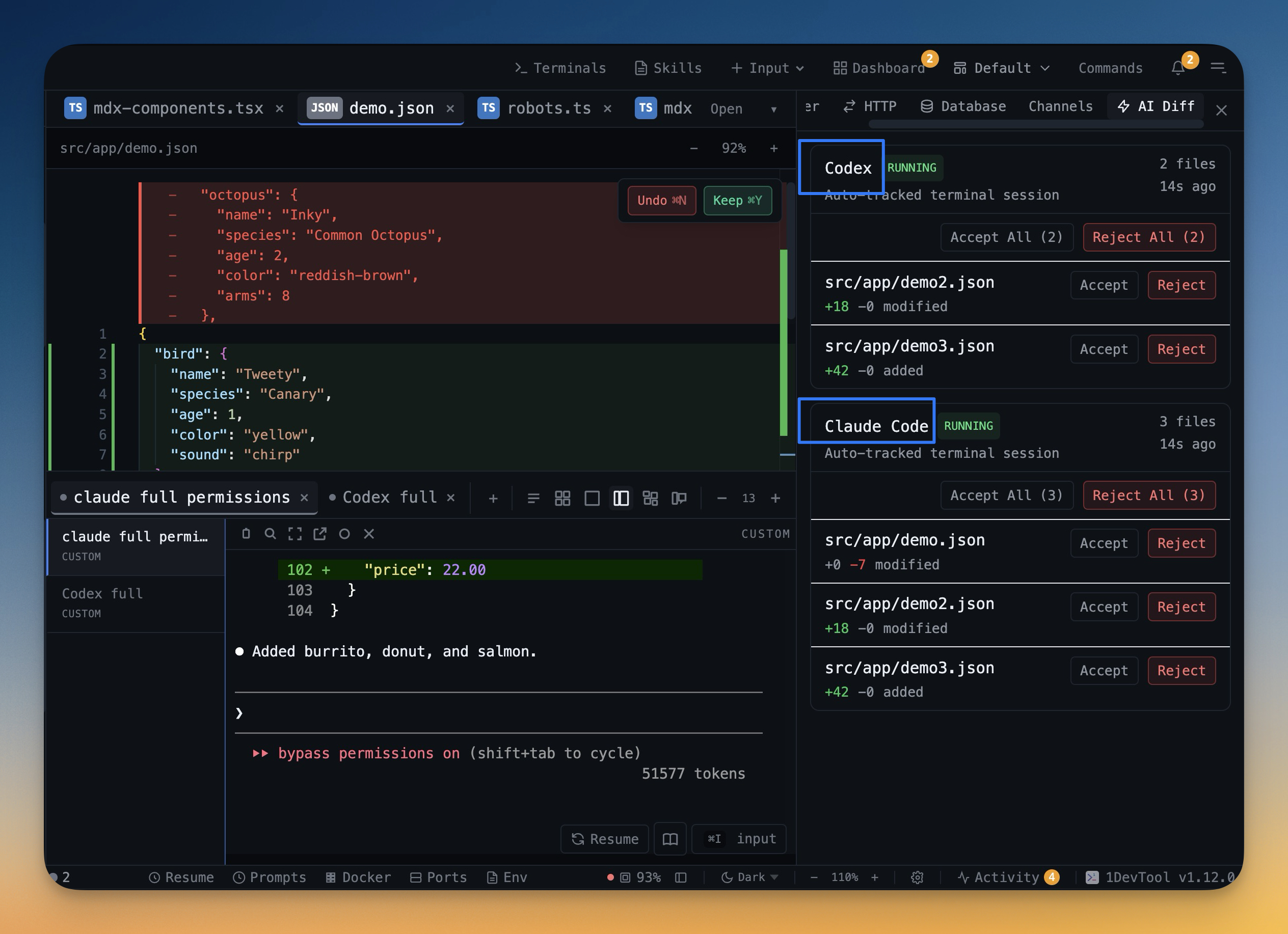
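The checkpoint itself is plain Git. This Python sketch only assembles standard `git add`, `git commit`, and `git worktree add -b` invocations, and by default it just prints them. The branch and directory names are hypothetical. This is not how 1DevTool creates worktrees internally.

```python
import shlex
import subprocess


def checkpoint_commands(message: str) -> list[list[str]]:
    """Stage all changes and record a checkpoint commit you can reset back to."""
    return [
        ["git", "add", "--all"],
        ["git", "commit", "--message", f"checkpoint: {message}"],
    ]


def worktree_command(path: str, branch: str, base: str = "HEAD") -> list[str]:
    """Create `branch` from `base` and check it out in a separate directory."""
    return ["git", "worktree", "add", "-b", branch, path, base]


def run(commands: list[list[str]], dry_run: bool = True) -> None:
    """Print the commands (dry run) or execute them, stopping on the first failure."""
    for argv in commands:
        if dry_run:
            print(shlex.join(argv))
        else:
            subprocess.run(argv, check=True)


# Hypothetical names for one agent experiment.
run(checkpoint_commands("before parser refactor"))
run([worktree_command("../myrepo-agent-parser", "agent/parser-refactor")])
```

With `dry_run=False`, `git commit` exits non-zero when there is nothing to commit, so `check=True` raises `subprocess.CalledProcessError`. To roll back, run `git reset --hard <checkpoint-sha>`. To discard the whole experiment, run `git worktree remove <path>`.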

4. Review Agent Diffs Before Accepting

An agent's summary of its work is not evidence. The diff is evidence. Review the changed files directly, with a way to accept good files and revert bad ones.

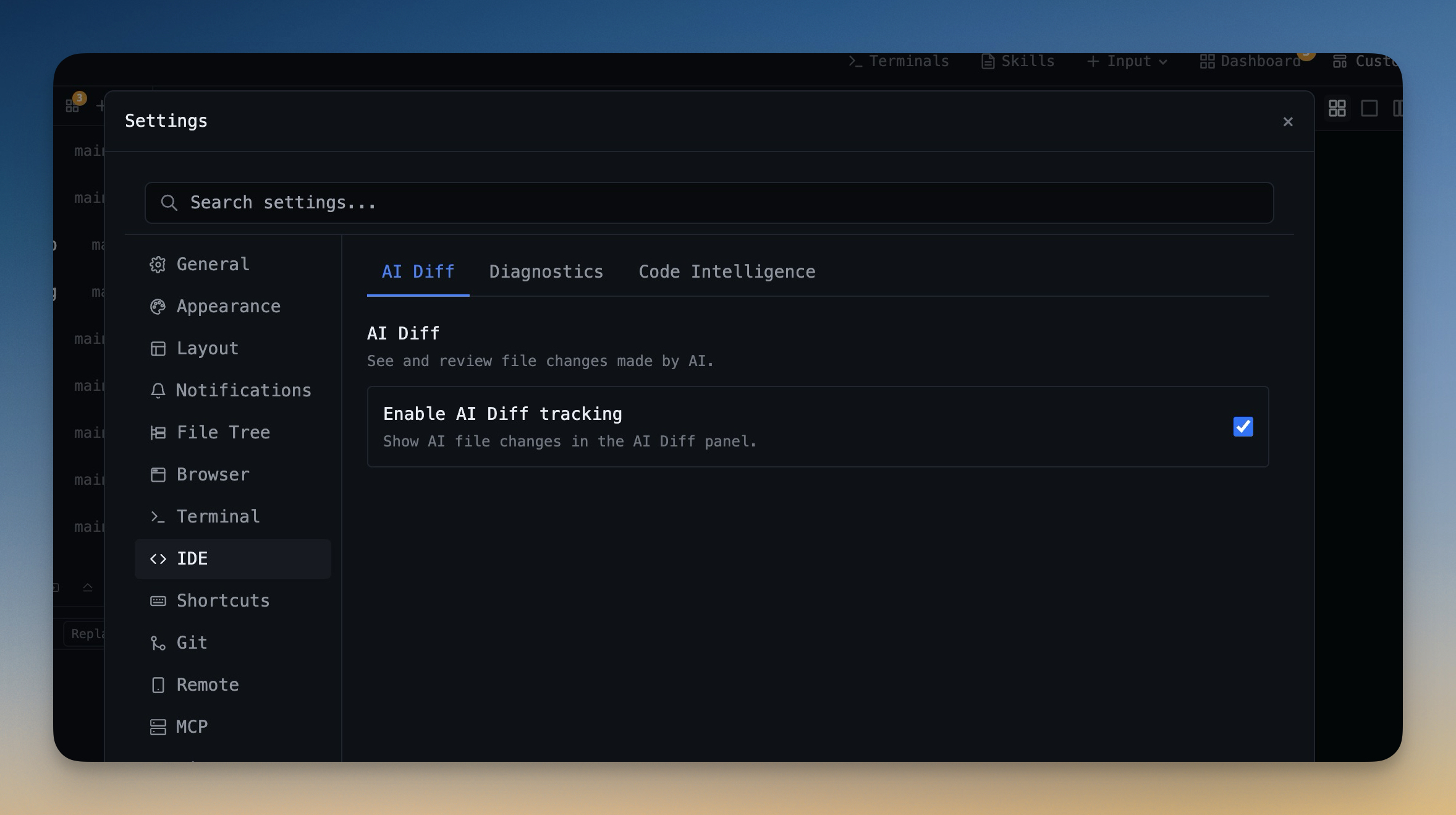

AI Diff tracks file modifications made by AI agents and groups them into reviewable sessions. Use per-file Accept and Revert when a run is partially good, and bulk controls only when the whole run is clearly safe or clearly wrong.
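The per-file rule can be modeled as a small state machine. This is a hypothetical Python sketch of the review decision, not AI Diff's implementation or API. Every changed file starts pending and gets accepted or reverted on its own. The bulk `decide_all` is kept separate so that using it is a conscious choice.

```python
from dataclasses import dataclass, field
from enum import Enum


class Decision(Enum):
    PENDING = "pending"
    ACCEPTED = "accepted"
    REVERTED = "reverted"


@dataclass
class ReviewSession:
    """Hypothetical model of one agent run's changed files, decided per file."""

    files: dict[str, Decision] = field(default_factory=dict)

    @classmethod
    def from_changes(cls, paths: list[str]) -> "ReviewSession":
        return cls({path: Decision.PENDING for path in paths})

    def accept(self, path: str) -> None:
        self._decide(path, Decision.ACCEPTED)

    def revert(self, path: str) -> None:
        self._decide(path, Decision.REVERTED)

    def decide_all(self, decision: Decision) -> None:
        # Bulk action: reserve for runs that are clearly safe or clearly wrong.
        for path in self.files:
            self.files[path] = decision

    def pending(self) -> list[str]:
        return [path for path, d in self.files.items() if d is Decision.PENDING]

    def _decide(self, path: str, decision: Decision) -> None:
        if path not in self.files:
            raise KeyError(f"{path} was not changed in this session")
        self.files[path] = decision


session = ReviewSession.from_changes(["src/parser.py", "src/cli.py", "README.md"])
session.accept("src/parser.py")  # good change, keep it
session.revert("src/cli.py")     # out-of-scope change, undo it
print(session.pending())         # ['README.md']
```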

5. Capture a PR Decision Log

The final artifact should explain why the patch exists, not just what changed. This matters for teammates and for your future self when a regression appears later.

`PR decision log: Goal: <user-visible or technical goal> Scope: <files/modules intentionally changed> Out of scope: <what was deliberately not changed> Tradeoff: <choice made and why> Tests: <commands run or manual checks> Risk: <remaining risk> Rollback: <how to undo if needed>`
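To keep the log from shipping with blank fields, you can render it from a structure. This is a minimal Python sketch. The class name and the example values are hypothetical, and the labels mirror the template above.

```python
from dataclasses import dataclass, fields


@dataclass
class DecisionLog:
    """The PR decision log template as a structure that refuses blank fields."""

    goal: str
    scope: str
    out_of_scope: str
    tradeoff: str
    tests: str
    risk: str
    rollback: str

    def render(self) -> str:
        lines = ["PR decision log:"]
        for item in fields(self):
            value = getattr(self, item.name).strip()
            if not value:
                raise ValueError(f"decision log field '{item.name}' is empty")
            # "out_of_scope" -> "Out of scope", matching the template's labels.
            lines.append(f"{item.name.replace('_', ' ').capitalize()}: {value}")
        return "\n".join(lines)


# Hypothetical example values.
log = DecisionLog(
    goal="Return a clear error when an upload exceeds the size limit",
    scope="src/upload/validate.py, tests/upload/test_validate.py",
    out_of_scope="The size limit value itself",
    tradeoff="Check the declared length before reading the body; rejects early but trusts the header",
    tests="pytest tests/upload",
    risk="Clients that omit the declared length still take the old path",
    rollback="Revert the merge commit; no data or schema changes",
)
print(log.render())
```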

What to Review Manually

Some areas should always get human review, even when the agent sounds confident:

- Authentication, authorization, permissions, and token handling.

- Payment, billing, account deletion, and irreversible user actions.

- Database migrations and data transformation scripts.

- Shared abstractions used across many modules.

- Build, release, deployment, and infrastructure configuration.

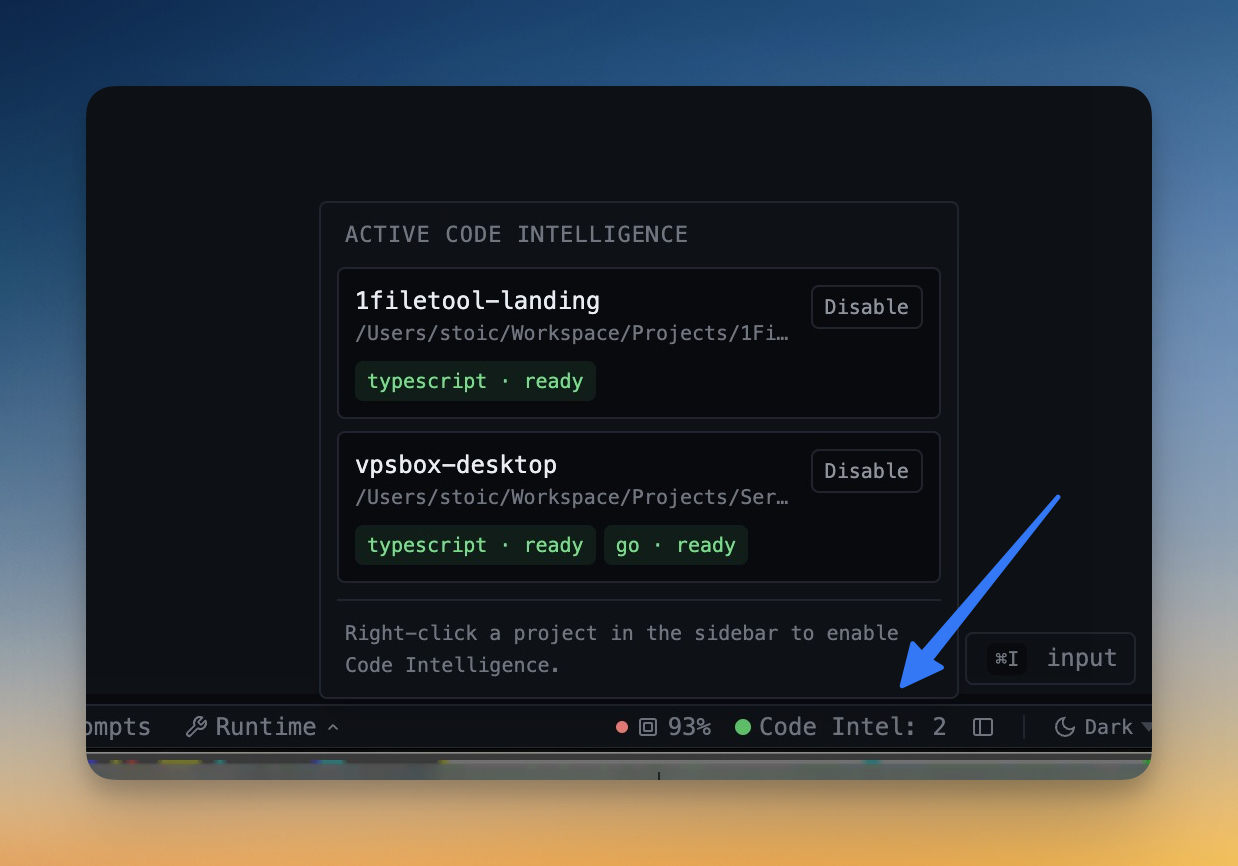
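A path-based check can route these areas to a human automatically. The patterns below are hypothetical and deliberately broad (`*auth*` also matches `author.py`), because over-flagging is the safer failure here. Shared abstractions cannot be detected from a path alone, so this sketch leaves them to judgment.

```python
from fnmatch import fnmatchcase

# Hypothetical patterns; map each area to your own repository layout.
MANUAL_REVIEW_RULES = {
    "auth, permissions, tokens": ["*auth*", "*permission*", "*token*"],
    "payments and irreversible actions": ["*billing*", "*payment*", "*delete_account*"],
    "migrations and data scripts": ["*migrations/*", "scripts/data/*"],
    "build, release, infra": ["Dockerfile", ".github/workflows/*", "infra/*", "*.tf"],
}


def manual_review_reasons(changed_files: list[str]) -> dict[str, list[str]]:
    """Map each changed path to the review areas its path matches."""
    flagged: dict[str, list[str]] = {}
    for path in changed_files:
        reasons = [
            area
            for area, patterns in MANUAL_REVIEW_RULES.items()
            if any(fnmatchcase(path, pattern) for pattern in patterns)
        ]
        if reasons:
            flagged[path] = reasons
    return flagged


changed = ["src/auth/session.py", "db/migrations/0042_add_index.py", "README.md"]
for path, areas in manual_review_reasons(changed).items():
    print(f"{path}: {', '.join(areas)}")
```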

Use Code Intelligence as a Second Signal

A diff can look right while the project is still broken. Pair review with diagnostics from a real project-aware language engine. 1DevTool's Code Intelligence runs language servers for projects you opt into, so diagnostics for TypeScript, Python, Go, Rust, and other languages come from the real project files instead of incomplete editor guesses.
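If you want a comparable second signal outside the editor, a command-line type checker or compiler pass can stand in. This sketch is a swapped-in technique, not how Code Intelligence runs language servers. It picks `tsc --noEmit`, `mypy`, `go vet`, or `cargo check` based on marker files at the project root, and it assumes those tools are installed.

```python
import subprocess
from pathlib import Path

# Marker file -> command-line checker for that ecosystem.
# Assumes each tool is installed; swap in whatever your project already uses.
CHECKERS = {
    "tsconfig.json": ["npx", "tsc", "--noEmit"],
    "pyproject.toml": ["python", "-m", "mypy", "."],
    "go.mod": ["go", "vet", "./..."],
    "Cargo.toml": ["cargo", "check"],
}


def checks_for(root_entries: set[str]) -> list[list[str]]:
    """Pick checker commands for the marker files present at the project root."""
    return [argv for marker, argv in CHECKERS.items() if marker in root_entries]


def run_checks(root: str = ".") -> list[str]:
    """Run each selected checker in `root`; return the commands that failed.

    A checker that is not installed raises FileNotFoundError from subprocess.run.
    """
    entries = {p.name for p in Path(root).iterdir()}
    failures = []
    for argv in checks_for(entries):
        if subprocess.run(argv, cwd=root).returncode != 0:
            failures.append(" ".join(argv))
    return failures
```

Calling `run_checks("path/to/project")` after reviewing a diff gives a pass/fail list to set beside the diff review. A clean diff with a failing checker is exactly the "looks right, still broken" case.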

Common Mistakes

- Letting agents patch immediately: you lose the chance to catch bad scope early.

- Huge unscoped prompts: the agent succeeds at something, but not necessarily your thing.

- Reviewing only summaries: agent summaries are useful, but the diff is the source of truth.

- No rollback boundary: when undo is unclear, every agent run feels riskier than it needs to.

The goal is not to make coding agents harmless. The goal is to make their work inspectable, scoped, and reversible. Plan first, patch second, review the AI Diff, and keep a decision log when the change matters.

Use 1DevTool when you want AI terminals, channel workflows, AI Diff, Git Worktrees, and project-aware diagnostics in the same review loop.
